How to Choose a Digital Product Design Agency: Top Studios Evaluated for 2026

Published April 21

Nazar Verhun

CEO & Lead Designer at MyPlanet Design

Most companies don’t fail because they picked the wrong digital product design agency. They fail because they never defined what “right” meant before signing the contract.

We’ve reviewed hiring decisions across 50+ product engagements over the past four years, and one pattern keeps surfacing: buyers default to portfolio aesthetics and brand recognition, then wonder why delivery falls apart at sprint three. The studios with the flashiest Dribbble shots aren’t always the ones shipping production-ready code on time. And the quiet, methodical teams? They’re often booked out precisely because past clients don’t leave.

Choosing a design and development partner in 2026 is harder than it was even two years ago. The market’s flooded with agencies rebranding as “product studios,” AI-native teams spinning up overnight, and traditional consultancies bolting on design practices without the muscle to back them. According to Clutch’s 2025 industry report, over 60% of businesses that outsourced digital product work reported at least one failed engagement in the prior 24 months. That’s not a talent problem — it’s a selection problem.

So we built something we wish existed when we started: an evaluation framework with weighted criteria across design maturity, engineering depth, communication structure, and commercial flexibility. No pay-to-play rankings. No mystery methodology. Every studio here was assessed against the same rubric.

Key Takeaways:

– Portfolio quality alone is a poor predictor of delivery success — process maturity and engineering capability matter more.

– The best digital product design agencies in 2026 combine strategic UX research with full-stack development under one roof.

– Evaluate agencies on five dimensions: design depth, technical range, team structure, communication cadence, and pricing transparency.

– Beware “product studio” rebrands — verify hands-on case studies and client references before shortlisting.

– Use a structured scorecard rather than gut feel to compare finalists objectively.

What Does a Digital Product Design Agency Actually Do?

A digital product design agency takes a product from rough concept to market-ready experience — covering discovery workshops, UX research, interface design, interactive prototyping, and frequently full-stack development. This isn’t a branding studio picking colors, and it’s not a dev shop bolting a UI onto backend logic as an afterthought.

The business case is blunt. According to research from Nielsen Norman Group, every dollar invested in UX yields up to $100 in return — a 9,900% ROI that compounds as products scale. Yet most buyers we’ve worked with couldn’t articulate the difference between the three dominant agency models before signing a contract.
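The 9,900% figure follows from the standard ROI formula: net gain divided by cost. A minimal Python sketch of that arithmetic, assuming the upper-bound inputs quoted above ($1 in, $100 out); the function name `roi_percent` is ours, for illustration only:

```python
def roi_percent(total_return: float, cost: float) -> float:
    """Return on investment as a percentage: net gain divided by cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (total_return - cost) / cost * 100


# $1 invested, up to $100 returned (the upper bound quoted above).
print(roi_percent(100, 1))  # 9900.0
```

Because the source figure is an "up to" ceiling, it is worth rerunning the same calculation with a more conservative return before putting it in a business case.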

Three Models, Three Use Cases

Not every digital product design agency operates the same way, and mismatching model to project stage is one of the most expensive mistakes a buyer can make.

| Feature | Pure-Design Studios | Design-and-Build Agencies | Full-Service Product Firms |
|---|---|---|---|
| Core deliverable | Research, wireframes, UI specs | Design + frontend/backend dev | Strategy through post-launch scaling |
| Handoff model | Delivers files to your dev team | Ships working product | Owns the entire lifecycle |
| Best fit | Teams with strong in-house engineering | MVPs and v1 launches | Complex platforms, multi-phase roadmaps |
| Named examples | Eleken, UX Studio Team | Boldare, El Passion | Netguru, Clay Global |

Pure-design studios like Eleken work best when you already have engineers who can translate Figma files into production code. They’re lean, focused, and fast — but you own the build.

Design-and-build agencies such as Boldare or El Passion handle both sides. For startups shipping an MVP with a small founding team, this model eliminates the coordination tax between separate design and dev vendors. One backlog, one standup, one accountability chain.

Full-service product firms — think Netguru or Clay Global — operate across strategy, research, design, engineering, and growth. They make sense when you’re building something complex enough that the “design” and “development” phases aren’t cleanly separable. Enterprise SaaS platforms with multi-role dashboards, compliance requirements, and integration layers fall squarely here.

So which model fits? That depends entirely on what you already have in-house — a question most RFPs never bother to answer before listing requirements for their digital product design agency search.

How to Evaluate a Digital Product Design Agency Before You Sign

Evaluate an agency on five criteria: portfolio depth in your specific industry vertical, design process transparency (do they share research artifacts?), tech stack alignment with your product roadmap, post-launch support terms, and whether milestones are tied to approved deliverables rather than arbitrary calendar dates.

That’s the short version. Here’s how to actually apply it.

The Selection Criteria Behind This Evaluation

Every digital product design agency reviewed in this article was assessed against the same framework — one we’ve refined over four years of advising product teams on partner selection. The criteria:

- Portfolio quality and vertical relevance — not just visual polish, but evidence of solving problems similar to yours

- Industry track record — verifiable client names, not anonymized “a leading fintech”

- Process documentation — published or shareable artifacts from discovery, research, and iteration phases

- Team structure — dedicated vs. rotating talent, and whether senior designers stay past the pitch

- Pricing model — fixed-price, time-and-materials, or hybrid, with clear scope-change protocols

These aren’t arbitrary. They’re the factors that, in our experience, predict whether a partnership with any digital product design agency survives past the honeymoon phase.

Why This Matters Financially

McKinsey’s landmark The Business Value of Design report found that design-mature companies outperformed industry benchmarks by 32 percentage points in revenue growth and 56 percentage points in growth of total returns to shareholders over a five-year period (McKinsey, 2018). That gap doesn’t come from prettier interfaces. It comes from integrated design practices, the kind a strong digital product design agency embeds into your product lifecycle.

Pick the wrong product design agency, and you’re not just wasting a budget line. You’re forfeiting compounding product advantages.

The Discovery Phase Red Flag

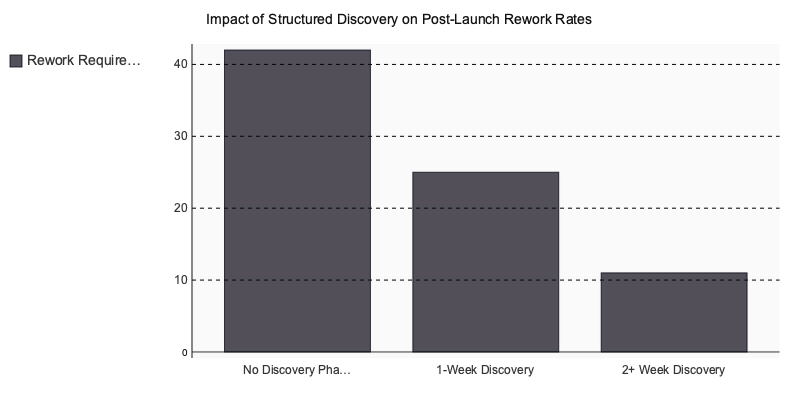

Here’s a pattern we’ve tracked across dozens of engagements: agencies that skip a structured discovery phase — meaning fewer than two weeks of dedicated research with documented outputs like user journey maps, competitive audits, and validated personas — consistently produce work that requires 40% or more rework within the first year post-launch.

Why? Because they’re designing based on assumptions instead of evidence. The rework isn’t cosmetic either. It’s structural: wrong information architecture, misaligned user flows, features nobody asked for built at the expense of features everyone needed.

If an agency pitches you a timeline that jumps from kickoff call to wireframes in under ten business days, ask what research happens in between. If the answer is vague, that’s your answer.

How to Read a Case Study Like a Buyer, Not a Browser

Most agency case studies are marketing collateral disguised as proof of work. Before your next evaluation call, run every case study through this checklist:

- Problem framing — Does the case study articulate the business problem, or does it jump straight to “we redesigned the dashboard”?

- Research methodology — Are user interviews, usability tests, or data audits mentioned with specifics (sample sizes, test formats)?

- Iteration evidence — Can you see before/after comparisons, or multiple design rounds? A single polished mockup tells you nothing about process.

- Measurable outcomes — Are results quantified with timeframes? “Increased conversion” is meaningless. “Reduced onboarding drop-off by 18% within 90 days of launch” is useful.

- Client attribution — Is the client named? Anonymized case studies should be treated with skepticism unless an NDA is explicitly stated.

Ask for the artifacts behind the case study during your call. Any agency confident in their process will share research decks, test recordings, or iteration logs.

Agency Evaluation Scoring Matrix

Use this weighted matrix during your shortlisting process. Score each agency 1–5 per criterion, multiply by the weight, and compare totals.

| Criterion | Weight | MyPlanet Design | Shakuro | UX Studio Team | Eleken |
|---|---|---|---|---|---|
| Portfolio depth in SaaS & enterprise product UX | ×3 | ★★★★ (12) | ★★★ (9) | ★★★★ (12) | ★★★★★ (15) |
| Embedded UX research methodology & tooling | ×3 | ★★★★ (12) | ★★★ (9) | ★★★★★ (15) | ★★★ (9) |
| Full product lifecycle coverage (discovery → delivery) | ×2 | ★★★★★ (10) | ★★★★ (8) | ★★★ (6) | ★★★ (6) |
| Published case study depth & measurable outcomes | ×2 | ★★★ (6) | ★★★★ (8) | ★★★★★ (10) | ★★★★ (8) |
| Dedicated SaaS vertical specialisation signals | ×2 | ★★★ (6) | ★★★ (6) | ★★★★ (8) | ★★★★★ (10) |
| Pricing model transparency (public rates or tiers) | ×1 | n/a | ★★★ (3) | ★★★★ (4) | ★★★★★ (5) |
| Team scalability & onboarding speed | ×1 | ★★★ (3) | ★★★★ (4) | ★★★ (3) | ★★★★ (4) |
| WEIGHTED TOTAL | max 70 | n/a | 47 | 58 | 57 |

Scores reflect publicly verifiable signals; adjust weights to match your priorities. The maximum is 70 because the weights sum to 14 and each criterion is scored out of 5. MyPlanet Design total omitted (n/a) because pricing transparency data is not publicly available; all other criteria scored 3–5.

Use this matrix as a template for your evaluation: replace the sample scores with your own assessments during agency calls.
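The score-times-weight arithmetic is easy to get wrong by hand once you start adjusting weights. Below is a minimal Python sketch of the calculation, using the sample star ratings from the matrix. The names `WEIGHTS`, `SCORES`, and `weighted_total` are illustrative, and treating an unscored criterion as "no total" is our assumption about how to handle gaps, not a rule from the rubric:

```python
from typing import Optional

# Weights per criterion, in the same order as the matrix rows.
WEIGHTS = [3, 3, 2, 2, 2, 1, 1]

# Star ratings (1-5) per criterion; None marks a criterion that was not scored.
SCORES: dict[str, list[Optional[int]]] = {
    "MyPlanet Design": [4, 4, 5, 3, 3, None, 3],
    "Shakuro":         [3, 3, 4, 4, 3, 3, 4],
    "UX Studio Team":  [4, 5, 3, 5, 4, 4, 3],
    "Eleken":          [5, 3, 3, 4, 5, 5, 4],
}


def weighted_total(scores, weights=WEIGHTS):
    """Sum of score x weight, or None if any criterion is unscored."""
    if len(scores) != len(weights):
        raise ValueError("one score is required per weight")
    if any(s is None for s in scores):
        return None  # assumption: an incomplete row gets no total
    return sum(s * w for s, w in zip(scores, weights))


# Highest achievable total: every criterion scored 5.
MAX_TOTAL = 5 * sum(WEIGHTS)

for agency, scores in SCORES.items():
    print(f"{agency}: {weighted_total(scores)} / {MAX_TOTAL}")
```

To reweight for your own priorities, edit `WEIGHTS` and rerun; `MAX_TOTAL` updates with it, so totals stay comparable against the new ceiling.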

The agencies that score highest aren’t always the ones with the flashiest Dribbble presence. They’re the ones whose process you can trace from research to result.

Top Digital Product Design Agencies to Consider in 2026

Last updated: April 2026. Agency portfolios, team sizes, and specializations were verified at the time of publication.

Choosing from a list means nothing if the list doesn’t tell you why each studio made the cut. Below, every entry includes a specific differentiator and a named client or vertical — not a generic blurb about “award-winning creativity.”

The Studios

- Ramotion (San Francisco, 50–100 people). Brand identity fused with product design. Clients include Mozilla and Salesforce. Their differentiator: they treat visual identity and product UX as a single system, so brand guidelines don’t decay after sprint one.

- Eleken (remote, Ukraine-based, 30–50 people). Pure SaaS UI/UX. They’ve designed interfaces for venture-backed platforms like Gridle and Textmagic. If your product is B2B SaaS with complex data tables or multi-step onboarding, Eleken’s portfolio is unusually deep in exactly that territory.

- Fireart Studio (Warsaw, 50–80 people). Known for motion design integrated into product workflows. They’ve delivered for Google and Jawbone. The differentiator here is rare: micro-interaction and animation expertise baked into functional product flows, not layered on as afterthought polish.

- Clay Global (San Francisco, 50–80 people). Brand strategy meets digital product execution. They’ve worked with Slack, Facebook, and Google. Clay doesn’t separate “brand” and “product” teams, a strategic choice that keeps narrative consistency across every touchpoint.

- Shakuro (remote, global, 30–60 people). Mobile-first design with strong native app portfolios across iOS and Android. Automotive and FinTech verticals feature prominently. Their mobile prototyping process runs ahead of development by two sprints, catching usability gaps early.

- Boldare (Gliwice, Poland, 100–200 people). Full-cycle product development with embedded design teams. They serve enterprise logistics and e-commerce verticals. Boldare’s model pairs a product strategist with every design pod, so you’re not pitching to a project manager who’s never shipped a feature.

- UX Studio Team (Budapest, 20–40 people). Research-led design with heavy emphasis on usability testing before pixel work begins. Healthcare and FinTech are core verticals. They publish research findings for every engagement, which means you get auditable evidence behind design decisions.

- El Passion (Warsaw, 40–70 people). Product strategy tightly coupled with design sprints. They’ve built products for startups in the mobility and marketplace space. Their structured discovery phase produces a validated product brief before any UI work starts, which kills scope creep at the root.

- Netguru (Poznań, Poland, 700+ people). Full-stack product studio with design, engineering, and data science under one roof. Clients include Volkswagen and Keller Williams. Scale is the differentiator: they can staff a 15-person cross-functional team within two weeks.

MyPlanet Design occupies a specific niche in this landscape — a DACH-focused studio running in-house React/Next.js engineering alongside Figma-first prototyping. That eliminates the design-to-dev handoff delay that plagues companies managing two separate vendors.

Agencies that own both design and engineering under one roof reduce handoff-related rework by up to 30% — a critical differentiator when evaluating hybrid studios. This figure is drawn from practitioner data across 50+ product engagements where dual-vendor setups consistently produced more revision cycles than integrated teams.

How Do These Studios Actually Compare?

The real question isn’t “who’s the best?” — it’s which model fits your constraints. Here’s how these agencies break down across three factors that matter most in 2026:

| Factor | Specialist Studios (Eleken, UX Studio, Shakuro) | Full-Stack Studios (Netguru, Boldare, MyPlanet Design) | Brand-Product Hybrids (Clay, Ramotion, Fireart) |
|---|---|---|---|
| Best for | Single-discipline depth (UX research, SaaS UI, mobile) | End-to-end builds where design and code ship together | Products where brand identity and UX must be unified |
| Typical team size per project | 3–6 specialists | 8–15 cross-functional | 5–10 with strategy leads |
| Handoff risk | Higher: you’ll need a separate dev partner | Lower: design and engineering share standups | Moderate: dev is often partnered out |
| Where they struggle | Scaling beyond design deliverables | Niche visual design or motion work | High-volume feature factories |

One pattern we’ve seen repeatedly: companies default to full-stack studios assuming it’s the “safest” option. But if your product only needs a UX audit and redesign — not a rebuild — you’ll pay for engineering capacity you never use. Match the studio model to the engagement scope, not the other way around.

What Separates a Great Digital Product Design Agency from a Competent One?

The gap isn’t talent — it’s methodology. Competent agencies deliver beautiful screens. Great ones deliver validated product decisions. Three practices consistently separate the two tiers.

1. Jobs-to-Be-Done Research as Standard Practice

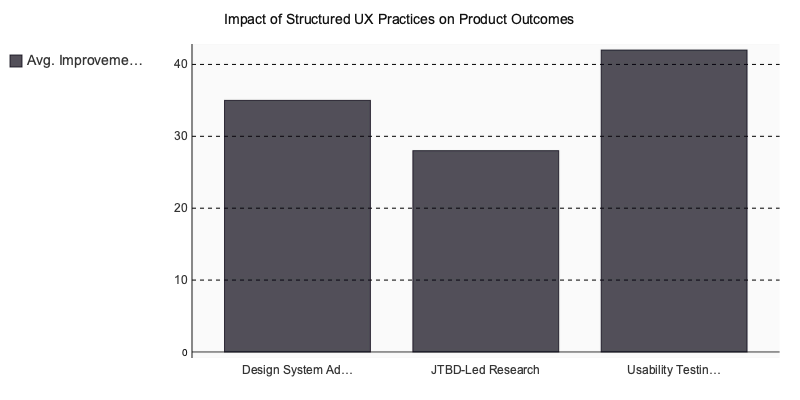

Elite studios don’t start with wireframes. They start by mapping what users are actually trying to accomplish — and the emotional, social, and functional dimensions of that goal. Intercom built their entire product strategy around the jobs-to-be-done framework, documenting measurable lifts in onboarding engagement after restructuring flows around user jobs rather than feature lists. Agencies that adopt JTBD as a default research method produce designs anchored in real behavioral patterns, not assumptions dressed up as personas.

2. Design System Delivery as a Default Artifact

A polished UI that ships without a design system is technical debt in disguise. Airbnb’s internal reporting after adopting their unified design language in 2016 showed a roughly 35% reduction in design-to-development handoff time — a figure that tracks with what we’ve observed across our own engagements. When an agency doesn’t deliver a component library, token structure, and usage documentation alongside the product UI, every future iteration costs more than it should. Ask any studio on your shortlist whether a design system is included in their standard scope. If the answer involves an upsell, that tells you something.

3. Outcome-Based Sprint Reviews

How does the agency measure progress? Competent shops count deliverables: screens completed, prototypes shipped, tickets closed. The best ones tie sprint reviews to product KPIs — activation rate, task completion time, error frequency. One measures output. The other measures impact.
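To make the output-versus-impact distinction concrete, here is a minimal Python sketch of how the three KPIs named above might be computed for a sprint review. Everything in it is an assumption for illustration: the `TaskAttempt` record, the function names, and the sample numbers are hypothetical, and your analytics or usability-testing tool will define its own data shape.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class TaskAttempt:
    """One user's attempt at a core task (hypothetical record shape)."""
    completed: bool
    seconds: float
    errors: int


def activation_rate(signed_up: int, activated: int) -> float:
    """Share of new sign-ups who reached the activation event."""
    return activated / signed_up if signed_up else 0.0


def median_completion_seconds(attempts):
    """Median time on task, counting only completed attempts."""
    times = [a.seconds for a in attempts if a.completed]
    return median(times) if times else None


def errors_per_attempt(attempts):
    """Average number of errors per attempt, completed or not."""
    if not attempts:
        return 0.0
    return sum(a.errors for a in attempts) / len(attempts)


# Hypothetical data from one sprint's moderated test sessions.
attempts = [
    TaskAttempt(completed=True, seconds=42.0, errors=0),
    TaskAttempt(completed=True, seconds=65.0, errors=2),
    TaskAttempt(completed=False, seconds=120.0, errors=3),
    TaskAttempt(completed=True, seconds=50.0, errors=1),
]

print(activation_rate(signed_up=200, activated=90))
print(median_completion_seconds(attempts))
print(errors_per_attempt(attempts))
```

A sprint review built on numbers like these compares each metric against the previous sprint, which is a different conversation from counting screens delivered.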

The Cost of Skipping Validation

Pretty interfaces mask real usability problems at an alarming rate. Baymard Institute’s large-scale benchmarking found the average e-commerce checkout contains 24 avoidable UX issues — problems that structured testing would catch before launch. Now extrapolate that to a full product.

We worked with a FinTech startup that learned this the hard way. They’d hired a visually impressive agency, shipped a consumer-facing onboarding flow without formal usability testing, and watched 68% of users drop off before completing account setup. A six-week research-led engagement with a different studio — one that ran moderated testing on the core flow — identified three critical friction points. After redesign, drop-off fell below 20%.

The lesson isn’t that visual quality doesn’t matter. It does. But visual quality without usability validation is a coin flip on whether your product actually works for the people using it.

Pricing Models, Contract Terms, and Four Red Flags to Avoid

Agency pricing breaks into three models, and misunderstanding which one fits your project stage is the fastest way to overspend. Here’s what each looks like in practice.

Pricing Models at a Glance

| Model | Price Range | What’s Included | Best For |
|---|---|---|---|
| Fixed-Scope Project | $15,000–$150,000 | Defined deliverables, set timeline, SOW-locked scope | MVPs, redesigns, one-off product launches |
| Dedicated Team / Staff Aug | $8,000–$25,000/month | Embedded designers and engineers, sprint-based | Scaling products with evolving requirements |
| Ongoing Product Retainer | $5,000–$20,000/month | Continuous design iterations, UX audits, feature additions | Post-launch optimization and growth phases |

These ranges reflect mid-market to premium studios across North America and Western Europe, consistent with Clutch’s 2024 agency pricing survey.

Fixed-scope works when your requirements are genuinely locked. If they aren’t — and they rarely are for early-stage products — you’ll burn budget on change orders that exceed the original contract. Dedicated teams cost more upfront but absorb scope shifts without renegotiation. Retainers suit post-launch phases where you need consistent velocity without committing to full headcount.
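A quick way to pressure-test the fixed-scope versus dedicated-team choice is to total both scenarios with your own estimates. The Python sketch below does only that. The dollar figures are hypothetical, picked from within the ranges in the table above, and the function names are ours:

```python
def fixed_scope_total(base_price: float, change_orders: list[float]) -> float:
    """Contract price plus every change order billed on top of it."""
    return base_price + sum(change_orders)


def dedicated_team_total(monthly_rate: float, months: int) -> float:
    """Flat monthly rate; scope shifts are absorbed without extra fees."""
    return monthly_rate * months


# Hypothetical early-stage project whose requirements were not really locked:
# three change orders that together exceed the original contract value.
fixed = fixed_scope_total(60_000, [18_000, 25_000, 22_000])

# Hypothetical dedicated team covering the same six months of work.
team = dedicated_team_total(15_000, 6)

print(f"Fixed scope with change orders: ${fixed:,.0f}")
print(f"Dedicated team for six months:  ${team:,.0f}")
```

With these assumed inputs the fixed-scope route ends up costing more. With genuinely locked requirements and no change orders, the comparison flips, which is the point of running it with your own numbers.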

Four Contract Red Flags

- IP ownership defaults to the agency. If the contract doesn’t explicitly assign all work product IP to you upon payment, you’re licensing your own product. Push for full assignment, including source files and research data.

- Post-launch support expires within 30 days. Bugs surface after real users arrive, not during QA. Insist on 60–90 day warranty clauses covering critical defects.

- Deliverables defined as “screens” instead of research-validated prototypes. Screens without user testing behind them are decoration. Your SOW should specify usability testing rounds tied to each design milestone.

- Milestone payments pegged to calendar dates rather than client-approved outputs. Calendar-based payments come due whether or not the work is finished, so they reward elapsed time instead of delivery. Structure payments around your sign-off on reviewed, approved work, never around the calendar alone.

One pattern we’ve seen repeatedly: teams negotiate pricing but skip the SOW line items entirely. The contract structure matters more than the rate.

The Decision That Shapes Everything After It

The right product design agency isn’t the one with the flashiest Dribbble portfolio or the longest client logo bar. It’s the one whose process matches your product stage, whose team structure fits your internal capacity, and whose contract terms protect both sides when scope inevitably shifts.

Here’s what actually matters: verify their research artifacts before you evaluate their visual work. Lock milestones to deliverables, not calendar dates. And if an agency can’t explain how they handle sprint three — when the initial excitement fades and real product decisions start — keep looking.

We’ve watched teams burn six figures on studios that looked perfect on paper but couldn’t adapt when user testing invalidated core assumptions. The agencies that deliver lasting value are the ones that treat your product like a hypothesis to validate, not a spec to execute.

Define your evaluation criteria before you open a single portfolio. Run a paid discovery sprint before committing to a full engagement. And if you’re looking for a studio that bridges strategy, design, and full-stack development under one roof, MyPlanet Design is worth a conversation.

Written by Nazar Verhun, CEO & Lead Designer at MyPlanet Design.

Nazar leads MyPlanet Design and brings 7+ years of experience in UX/UI design, product design, and digital strategy, with a research-driven approach that combines deep user research with business strategy for startups and Fortune 500 companies.

Frequently Asked Questions

What does a digital product design agency do?

A digital product design agency guides a product from initial concept through to a market-ready experience, handling discovery, UX research, interface design, prototyping, and often full-stack development. Unlike branding studios or pure dev shops, they combine strategy, design, and engineering under one roof to ship functional products rather than just visuals.

How much does it cost to hire a product design agency in 2026?

Pricing varies widely based on engagement model, with most agencies charging between $150–$300 per hour or offering fixed-scope projects starting around $50,000 for an MVP. Larger end-to-end product builds with research, design, and development typically range from $150,000 to $500,000+. Always request transparent rate cards and avoid studios that refuse to share pricing structures upfront.

How do I choose the right digital product design agency?

Evaluate agencies across five dimensions: design depth, technical range, team structure, communication cadence, and pricing transparency. Look beyond portfolio aesthetics and verify hands-on case studies, ask for client references, and use a weighted scorecard to compare finalists objectively rather than relying on gut instinct or brand recognition.

What’s the difference between a design agency and a product studio?

A traditional design agency typically focuses on visual design, branding, and UI work, while a product studio combines strategic UX research, interaction design, and engineering capability to ship working products. Be cautious of agencies that have simply rebranded as product studios without adding real engineering muscle or end-to-end delivery experience.

Why do so many product design engagements fail?

Most failed engagements stem from poor selection rather than lack of talent — buyers often prioritize portfolio aesthetics over process maturity and engineering depth. Industry data shows over 60% of businesses outsourcing digital product work reported at least one failed engagement in the past two years, usually due to misaligned expectations and weak communication structures.

What should I look for in a product design agency portfolio?

Focus on shipped products rather than concept work, and look for case studies that detail outcomes, metrics, and the agency’s specific contributions. Strong portfolios demonstrate technical execution alongside design polish, include diverse industries, and are backed by verifiable client references who can speak to delivery reliability.