What UI UX Design Services Actually Deliver — and How to Choose the Right Agency

Published May 6

Nazar Verhun, CEO & Lead Designer at MyPlanet Design

Most companies buying UI UX design services can’t tell you what they’re actually paying for. They sign a statement of work, wait eight weeks, and receive a Figma file full of polished screens — with no clarity on whether the underlying research, information architecture, or interaction logic will survive first contact with real users.

That gap between expectation and deliverable is expensive. Forrester’s research pegs every dollar invested in UX as returning up to $100, yet Baymard Institute’s 2025 e-commerce usability audits still find that 70% of sites fail basic checkout UX patterns. The ROI is there, but only when the work actually ships the right artifacts. McKinsey’s Design Index study found that top-quartile design performers outpaced industry benchmarks in revenue growth by as much as two to one — and the differentiator wasn’t aesthetics. It was the rigour of the process behind the pixels.

So why do so many engagements still produce pixel-perfect mockups that fall apart in development handoff?

We’ve reviewed briefs from over twenty client projects in the past two years, and the pattern is consistent: misalignment starts before a single wireframe gets drawn. Clients ask for “a redesign,” agencies deliver screens, and nobody defines the actual deliverable stack — the research synthesis, the journey maps, the annotated component specs — that separates decorative design from functional product work.

Key Takeaways:

- UI UX design services should produce a defined stack of deliverables, not just mockups: research reports, journey maps, and annotated specs.

- Forrester data shows UX investment can return up to 100x, but only when the right artifacts ship.

- McKinsey’s Design Index links revenue outperformance to process rigour, not visual polish.

- Most client-agency misalignment begins at the brief stage, before any design work starts.

- A credible agency delivers development-ready outputs (component libraries, interaction specs, and validated prototypes), not just pretty screens.

What Do UI UX Design Services Actually Include?

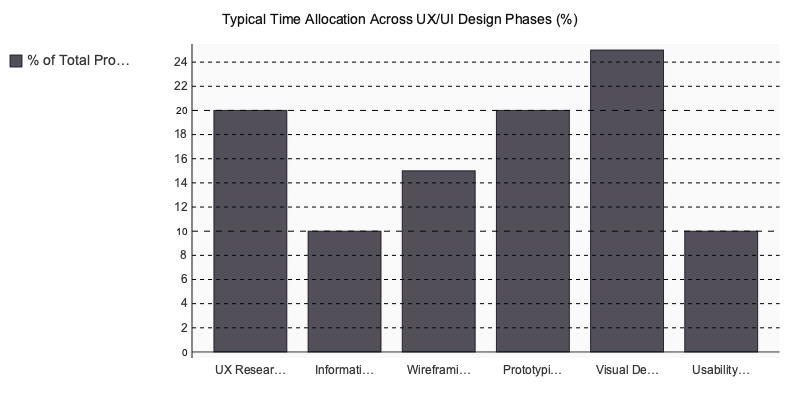

UI UX design services cover the full research-to-delivery process — user research, information architecture, wireframing, prototyping, visual design, and usability testing — giving a business a validated product blueprint before any production code is written.

That definition is clean enough for a slide deck. But what does each phase actually produce on your desk? Here’s the deliverable breakdown we walk clients through before every engagement starts.

The 6 Core Deliverable Phases

- UX Research: Validated personas, jobs-to-be-done journey maps, and moderated interview scripts. This phase answers who your users are and what they’re trying to accomplish, not what your stakeholders assume.

- Information Architecture: A site map and content hierarchy diagram defining how users navigate between screens. For complex SaaS products, this often includes taxonomy documentation and search logic.

- Wireframing: Annotated low-fidelity frames built in FigJam or Balsamiq. These aren’t pretty. They’re functional blueprints showing layout, content priority, and interaction triggers before anyone debates color palettes.

- Interactive Prototyping: A clickable Figma or Framer prototype with realistic micro-interactions. Stakeholders and test participants experience the product before a single line of production code exists.

- UI Visual Design: A design system with typed tokens for color, typography, and spacing, a component library, and full-screen builds. This is the artifact developers actually build from.

- Usability Testing: Moderated task-completion sessions plus recorded heatmap analysis. Nielsen Norman Group’s research consistently shows testing with just five users uncovers roughly 85% of usability problems.
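The five-user figure follows from a simple discovery model usually credited to Nielsen and Landauer: if each participant surfaces a share L of the problems, n participants surface 1 − (1 − L)^n of them. The sketch below assumes L = 0.31, the average commonly quoted alongside that model. The function name is ours, and your product's discovery rate may well differ.

```typescript
// Share of usability problems found after testing with n participants,
// using the model found(n) = 1 - (1 - L)^n.
// ASSUMPTION: L = 0.31 is a commonly quoted average per-participant
// discovery rate, not a measurement of any specific product.
function shareOfProblemsFound(participants: number, discoveryRate = 0.31): number {
  if (!Number.isInteger(participants) || participants < 0) {
    throw new RangeError("participants must be a non-negative integer");
  }
  if (discoveryRate < 0 || discoveryRate > 1) {
    throw new RangeError("discoveryRate must be between 0 and 1");
  }
  return 1 - Math.pow(1 - discoveryRate, participants);
}

// Diminishing returns: each extra participant adds less than the one before.
const discoveryCurve = [1, 3, 5, 8, 15].map((n) => ({
  participants: n,
  share: Number(shareOfProblemsFound(n).toFixed(3)),
}));
```

With the default rate, five participants land at about 84%, which is where the "roughly 85%" shorthand comes from. The curve also shows why several small test rounds usually beat one large one.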

Deliverables Shift by Product Type

Why does this matter? Because not every project gets the same output format. SaaS onboarding flows require annotated interaction specs and copy guidelines. Native mobile apps demand platform token alignment with Apple’s Human Interface Guidelines and Material Design 3. Enterprise dashboards need a WCAG 2.1 AA accessibility audit bundled with the design system handoff — a step too many agencies skip entirely.

If you’re evaluating proposals, look at whether the vendor’s deliverable list maps to your product type or reads like a generic template. That distinction separates agencies that ship working products from those that ship decoration.
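One concrete check inside the WCAG 2.1 AA audit mentioned above is text contrast: normal-size text needs a ratio of at least 4.5:1 against its background, and large text needs 3:1. The sketch below implements the WCAG relative-luminance and contrast-ratio formulas for 6-digit hex colours. The function names are ours, and a real audit covers far more than contrast.

```typescript
// Parse "#rrggbb" into three sRGB channels scaled to the 0..1 range.
function parseHex(hex: string): [number, number, number] {
  if (!/^#[0-9a-fA-F]{6}$/.test(hex)) {
    throw new Error(`Expected a colour like #1a2b3c, got "${hex}"`);
  }
  const value = parseInt(hex.slice(1), 16);
  return [((value >> 16) & 0xff) / 255, ((value >> 8) & 0xff) / 255, (value & 0xff) / 255];
}

// WCAG 2.x relative luminance: linearise each channel, then weight and sum.
function relativeLuminance(hex: string): number {
  const linear = (c: number): number =>
    c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
  const [r, g, b] = parseHex(hex);
  return 0.2126 * linear(r) + 0.7152 * linear(g) + 0.0722 * linear(b);
}

// Contrast ratio is (lighter + 0.05) / (darker + 0.05), from 1 up to 21.
function contrastRatio(foreground: string, background: string): number {
  const a = relativeLuminance(foreground);
  const b = relativeLuminance(background);
  return (Math.max(a, b) + 0.05) / (Math.min(a, b) + 0.05);
}

// AA thresholds: 4.5:1 for normal text, 3:1 for large text.
function meetsAA(foreground: string, background: string, largeText = false): boolean {
  return contrastRatio(foreground, background) >= (largeText ? 3 : 4.5);
}
```

On a white background, `#767676` passes AA for body text while the slightly lighter `#777777` narrowly fails. That is the kind of tool-specific detail an agency should be able to discuss when it describes its audit process.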

UX Audits: A Different Engagement Entirely

A UX audit isn’t a scaled-down design project. It’s a distinct service applying Nielsen Norman Group’s 10 usability heuristics to an existing product, producing a severity-ranked defect list and a quick-win backlog. Audits suit products already live but underperforming — conversion funnels leaking users, onboarding sequences with high drop-off, dashboards generating support tickets.

Cost-wise, expect audits to run 20–30% of a full design engagement. For teams that need answers before committing to a full redesign, they’re the highest-ROI starting point.

The Brief Misalignment We See Most Often

Across more than twenty client projects at MyPlanet Design, one pattern repeats more than any other: teams request “UI design only” when they actually lack foundational user research.

The scenario is always similar. Stakeholders arrive with a folder of reference screenshots from Dribbble, a rough feature list, and zero validated user data. They want polished screens. What they need is discovery.

Forrester’s research on UX return on investment estimates that every dollar invested in UX can return up to one hundred dollars, but that ROI assumes the research phase happened. Skip it, and you’re designing for assumptions that collapse on contact with real users.

In our experience, bypassing discovery typically adds two to four sprints of rework downstream. On a typical SaaS build, that translates to six to twelve weeks of wasted development effort — teams building features users didn’t request, structured around navigation patterns users don’t understand. The fix isn’t complicated: run five to eight moderated interviews before opening any design tool. Map actual workflows. Validate assumptions against real behavior. Our coverage of UX research methods walks through that process for teams who want to self-run the discovery phase.

How UI UX Design Services Impact Business Outcomes

Companies in the top quartile of McKinsey’s Design Index posted 32 percentage points higher revenue growth than their industry peers over a five-year period, according to McKinsey’s Business Value of Design report. Forrester Research puts the return in per-dollar terms: every $1 invested in UX can return up to $100. These aren’t aspirational numbers. They’re the business case for treating design as a revenue driver, not a discretionary budget line that gets cut when quarterly targets tighten.

The conversion-rate mechanism is equally concrete. Baymard Institute research pegs average e-commerce cart abandonment at 70.19% and estimates that better checkout design can lift conversion rates by up to 35.26% on large e-commerce sites. For a product manager modeling $2M in annual checkout revenue, that lift translates directly into projections worth scoping a design engagement around, before any code is written.
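A rough way to turn that percentage into a budget conversation is sketched below. It reads the 35.26% figure as an upper-bound relative lift in conversion rate and assumes traffic and average order value stay constant. The `captureShare` parameter and the three scenario values are our own illustrative assumptions, not Baymard data.

```typescript
interface CheckoutUpliftInput {
  annualCheckoutRevenue: number; // revenue currently completing checkout, per year
  maxConversionLift: number; // upper-bound relative lift in conversion rate (0.3526 = 35.26%)
  captureShare: number; // ASSUMPTION: fraction of that upper bound you expect to reach (0..1)
}

// With traffic and average order value held constant, revenue scales
// linearly with conversion rate, so the uplift is a simple product.
function projectAnnualUplift(input: CheckoutUpliftInput): number {
  const { annualCheckoutRevenue, maxConversionLift, captureShare } = input;
  if (captureShare < 0 || captureShare > 1) {
    throw new RangeError("captureShare must be between 0 and 1");
  }
  return Math.round(annualCheckoutRevenue * maxConversionLift * captureShare);
}

// Hypothetical scenarios for the $2M example: cautious, middling, ceiling.
const upliftScenarios = [0.25, 0.5, 1].map((captureShare) => ({
  captureShare,
  upliftUsd: projectAnnualUplift({
    annualCheckoutRevenue: 2000000,
    maxConversionLift: 0.3526,
    captureShare,
  }),
}));
```

At the ceiling the $2M example comes out at $705,200 a year. A team that expects to reach only half of the upper bound would budget against $352,600.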

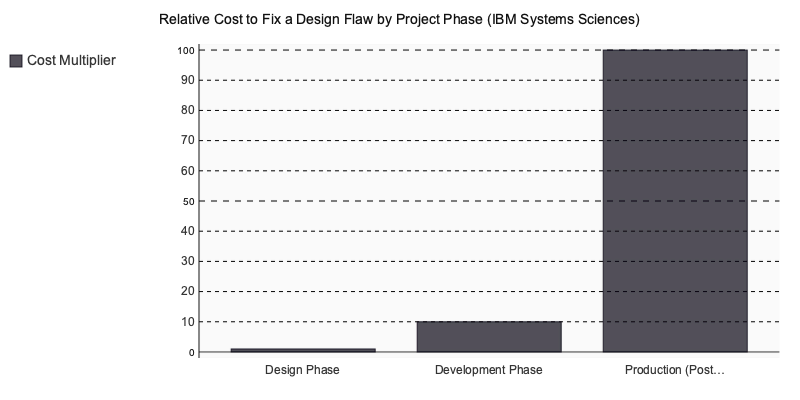

Then there’s the rework multiplier that most procurement teams underestimate. IBM’s systems sciences research established that fixing a design flaw in production costs approximately 100x more than catching it during the design phase. That cost curve makes UI UX design services function as risk mitigation that compresses total delivery cost — not an add-on expense. The relationship between phase and cost connects directly to how teams should approach MVP development and early project scoping.

What This Looks Like in Practice

During our work on KovaApp, moderated usability testing at the Figma prototype stage surfaced a critical navigation issue. Users consistently missed a primary action path nested under a secondary menu structure. Had that flaw shipped into a development sprint, it would have cost a minimum of one full sprint in rework plus QA regression testing across connected features. Resolving it in the prototype took hours, not weeks — and the engineering team launched with a validated interaction flow instead of an assumption.

Why does this matter beyond a single engagement? Because the pattern repeats. In our experience, design validation before engineering kick-off doesn’t just improve the product — it protects the budget and compresses the delivery timeline by weeks.

What Should You Look for in a UI UX Design Services Partner?

A strong design partner shows research artifacts — not just polished screens — in their portfolio, documents their process with defined stage gates, and delivers annotated Figma files with spacing tokens and interaction specs. Agencies that skip discovery phases or can’t evidence usability testing in past projects rarely produce validated outcomes.

Five Criteria That Separate Serious Agencies from Screen Factories

Most vetting conversations fixate on aesthetics. That’s the wrong filter. Here’s a framework we apply when advising clients who are evaluating agencies alongside us:

- Research depth. The portfolio includes wireframes, personas, journey maps, and usability test findings — not just Dribbble-ready mockups. If an agency can’t show how they arrived at a design decision, the decision was likely arbitrary.

- Process transparency. A documented design process with client review gates at each phase — discovery, wireframing, visual design, prototyping, testing. You should know exactly when you’ll see work and when you can redirect it.

- Accessibility compliance. The team can evidence WCAG 2.1 AA delivery on past projects with specific audit reports. In 2026, accessibility isn’t a nice-to-have — it’s a legal and commercial requirement across the EU and increasingly in North American markets.

- Handoff quality. Deliverables include annotated Figma files with design tokens, spacing definitions, and component documentation — ideally paired with Storybook references. A beautiful mockup that developers can’t implement accurately is waste, not design.

- Post-launch support. The agency offers a structured UX audit three to six months after launch, when real usage data reveals what the initial research couldn’t predict.
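The design tokens named under handoff quality are easier to evaluate once you have seen what they look like on the engineering side. The sketch below is a hypothetical token set in TypeScript. Every name and value is a placeholder of ours, not a real brand's system or a Figma export format.

```typescript
// Hypothetical token set. `as const` keeps the literal keys and values,
// so a typo in a token name becomes a compile-time error.
const tokens = {
  color: { textPrimary: "#1a1a1a", surface: "#ffffff", accent: "#0057d9" },
  spacing: { xs: 4, sm: 8, md: 16, lg: 24 }, // pixels
  typography: { bodySize: 16, headingSize: 28 }, // pixels
} as const;

type SpacingKey = keyof (typeof tokens)["spacing"];

// Typed accessor: space("md") compiles, space("xl") does not.
function space(key: SpacingKey): string {
  return `${tokens.spacing[key]}px`;
}

// Turn camelCase token names into kebab-case for CSS.
function kebab(name: string): string {
  return name.replace(/[A-Z]/g, (letter) => `-${letter.toLowerCase()}`);
}

// Emit one CSS custom property declaration per token in a group.
function toCssVariables(group: string, values: Record<string, string | number>): string[] {
  return Object.entries(values).map(([name, value]) => `--${group}-${kebab(name)}: ${value};`);
}

const colorVariables = toCssVariables("color", tokens.color);
```

When an agency says its handoff includes tokens, ask to see the equivalent of this file. A styles panel in a design tool is not the same thing as a source of truth that engineers can import.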

Why End-to-End Agencies Reduce Rework

Scope creep affects 52% of software projects, according to the Standish Group’s CHAOS Report. Design-development misalignment is a leading driver — and it’s almost always a handoff problem.

When design lives in one company and development in another, specifications get reinterpreted. At MyPlanet Design, all 20+ in-house experts work in unified product squads where designers and engineers share the same sprint cadence. That eliminates the specification re-interpretation layer that typically adds 15–25% rework overhead in siloed models. No outsourcing. No telephone game between Figma files and pull requests.

Does your current agency even know what framework your engineers are building in?

Red Flags vs. Quality Signals

Not every polished website means the agency behind it does rigorous work. Here’s what to watch for during evaluation:

Red flags:

- Portfolio shows only finished screens — no research documentation, no before-and-after rationale

- Fixed-price quotes issued before any discovery phase

- Can’t describe their accessibility audit process in concrete, tool-specific terms

- Vague answers about whether work is done in-house or subcontracted

Quality signals you can verify independently:

- Clutch reviews citing project-specific outcomes, not generic praise

- Awwwards recognition for UI craft and technical execution

- Upwork Top Rated status indicating sustained client satisfaction over time

MyPlanet Design holds all three (Clutch-reviewed, Awwwards-recognized, and Upwork Top Rated), which is uncommon for agencies with teams under fifty people.

Comparing Design Service Engagement Models

| Engagement model | Typical rate | Scope | Best for |
|---|---|---|---|
| Freelancer / Solo Designer | $30–80/hr | Visual design, basic prototyping, limited research capacity | Early-stage MVPs with tight budgets |
| Boutique Design Studio | $80–150/hr | UX research, wireframing, visual design, usability testing | Startups needing focused design sprints |
| Mid-Size Agency | $100–200/hr | Full UX/UI process, accessibility audits, design system creation | SMBs scaling an existing product |
| Full-Service Digital Agency (MyPlanet Design) | Custom project-based | End-to-end: research → design → development → AI integration, 20+ in-house specialists, design tokens with Storybook handoff, post-launch UX audits | Startups and enterprises needing validated design that ships as production code |

The full-service model isn’t always the right fit — a solo designer can move faster on a landing page. But for any product where design decisions directly affect engineering scope, having research, design, and development under one roof isn’t a luxury. It’s risk management.

Agency vs. In-House vs. Freelance: A Practical Comparison

Three hiring models compete for your design budget, and each breaks under different pressure. Which one fits depends less on cost and more on where your product actually sits.

| Dimension | Agency | In-House | Freelance |
|---|---|---|---|
| Time to first validated wireframe | 2–4 weeks | 3–6 months (hiring + ramp) | 1–2 weeks |
| Design system scalability (12 mo.) | High (systems thinking built in) | High (institutional continuity) | Low without continuous retainer |
| Research methodology depth | Full: interviews, testing, analytics | Grows with team maturity | Varies by individual |
| Cost predictability (fixed scope) | Fixed-price SOWs standard | Salary + tools + overhead | Hourly; scope creep risk |
| Post-launch iteration capacity | On-demand team scaling | Consistent but capped | Availability-dependent |

Freelancers produce the fastest first wireframe — but speed without system-level thinking creates compounding design debt. In-house teams build irreplaceable product context once ramped, yet senior UX designers in DACH markets command €75,000–€110,000 annually according to Glassdoor and LinkedIn Salary Insights, before tools and management overhead. For a Series A company with a six-month scope, that math rarely justifies the recruitment cost.

Three Failure Modes That Push Companies Toward Agencies

At MyPlanet Design, the majority of inbound requests come from teams that already tried the other two paths:

- Visual inconsistency — multiple freelancers producing screens with competing type scales, color tokens, and spacing because no shared design system exists.

- Research vacuum — decisions driven by stakeholder assumptions, not user evidence, surfacing only when usability testing reveals the concept doesn’t solve the actual problem.

- Handoff collapse — engineers receiving Figma files missing hover, loading, and error states, causing sprint delays while designers backfill specs.
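The handoff-collapse failure in particular can be caught before a sprint starts. As a minimal sketch, assume each component in the spec lists the interaction states the designer has documented. The required-state list and the spec shape below are our assumptions for illustration, not a Figma API.

```typescript
// ASSUMPTION: the states every interactive component must document.
// "default" is our addition; hover, loading, and error come from the text.
const REQUIRED_STATES = ["default", "hover", "loading", "error"] as const;
type InteractionState = (typeof REQUIRED_STATES)[number];

// Hypothetical spec entry, e.g. transcribed from an annotated design file.
interface ComponentSpec {
  name: string;
  documentedStates: InteractionState[];
}

// Return, per component, the required states the spec does not cover.
// Components with full coverage are left out of the result.
function findMissingStates(specs: ComponentSpec[]): Record<string, InteractionState[]> {
  const gaps: Record<string, InteractionState[]> = {};
  for (const spec of specs) {
    const missing = REQUIRED_STATES.filter(
      (state) => !spec.documentedStates.includes(state),
    );
    if (missing.length > 0) {
      gaps[spec.name] = missing;
    }
  }
  return gaps;
}

const handoffGaps = findMissingStates([
  { name: "SubmitButton", documentedStates: ["default", "hover"] },
  { name: "SearchField", documentedStates: ["default", "hover", "loading", "error"] },
]);
```

An empty result is a reasonable exit criterion for the design phase. Anything left in `handoffGaps` is work that would otherwise be backfilled mid-sprint.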

The Staged Model

Engage an agency for the initial zero-to-six-month product definition and design system build, then transition maintenance to an in-house designer who inherits the documented Figma library, token definitions, and annotated interaction specs. The goal: your hire extends the system rather than rebuilding from scratch.

If the agency route fits your stage, here’s how leading UX-focused firms compare on the criteria that matter most for product-stage companies:

| Criterion | Weight | MyPlanet Design | Uxstudioteam | Netguru | Ramotion | Eleken |
|---|---|---|---|---|---|---|
| End-to-end delivery (research → design → code) | ×3 | ★★★★★ (15) | ★★★☆☆ (9) | ★★★★★ (15) | ★★☆☆☆ (6) | ★★★☆☆ (9) |
| In-house team depth (no outsourcing) | ×2 | ★★★★★ (10) | ★★★★☆ (8) | ★★★★☆ (8) | ★★★★☆ (8) | ★★★★☆ (8) |
| DACH market expertise | ×2 | ★★★★★ (10) | ★★★☆☆ (6) | ★★★★☆ (8) | ★★☆☆☆ (4) | ★★★☆☆ (6) |
| Design system scalability | ×2 | ★★★★☆ (8) | ★★★★☆ (8) | ★★★★☆ (8) | ★★★★★ (10) | ★★★☆☆ (6) |
| Startup and SMB agility | ×2 | ★★★★★ (10) | ★★★★☆ (8) | ★★★☆☆ (6) | ★★★☆☆ (6) | ★★★★★ (10) |
| AI/ML integration capability | ×1 | ★★★★★ (5) | ★★☆☆☆ (2) | ★★★★☆ (4) | ★☆☆☆☆ (1) | ★★☆☆☆ (2) |
| Total | /60 | 58 | 41 | 49 | 35 | 41 |

Scores reflect publicly verifiable signals — adjust weights to match your priorities.

How to Structure and Scope a UI UX Design Engagement

A well-scoped design engagement follows four phases with defined deliverables and exit criteria — discovery, UX design, UI design, and handoff. Compressing any phase is the single most common reason projects blow past budget.

Phase 1: Discovery Sprint (1–2 weeks) — Stakeholder interviews, competitor audit, proto-personas, and a prioritized problem statement.

Phase 2: UX Design (3–5 weeks) — Information architecture, wireframes, interactive prototype, moderated usability testing with five or more participants.

Phase 3: UI Design (2–4 weeks) — Visual system definition, component library build, full-screen production files.

Phase 4: Handoff and QA (1 week) — Annotated Figma export, design token documentation, accessibility checklist sign-off.

At MyPlanet Design, our 20+ in-house experts follow this structure end-to-end, from research through development and AI integration, because we’ve seen what happens when teams skip phases to save a week. The rework costs three.

Three Scoping Mistakes That Inflate Timelines

- No agreed success metrics before kick-off. Define task-completion rate and conversion targets upfront — not after the prototype ships.

- Treating mobile and desktop as one scope. Separate information architectures add 40–60% to the UI design phase. Procurement teams that budget “responsive” as a single line item underestimate this every time.

- No async review protocol. Synchronous stakeholder approvals without defined Figma comment windows add one to two weeks per round.
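To see how these mistakes compound, the sketch below adds up the four phase ranges listed earlier and applies two of the surcharges described above. Pairing the 40% figure with the low end and the 60% figure with the high end is our simplifying assumption, as are the function and option names.

```typescript
// A [low, high] range in weeks.
type WeekRange = [number, number];

// Phase durations as listed in the four-phase structure.
const phaseWeeks: {
  discovery: WeekRange;
  uxDesign: WeekRange;
  uiDesign: WeekRange;
  handoff: WeekRange;
} = {
  discovery: [1, 2],
  uxDesign: [3, 5],
  uiDesign: [2, 4],
  handoff: [1, 1],
};

interface ScopeOptions {
  separateMobileAndDesktop: boolean; // adds 40–60% to the UI design phase
  syncReviewRounds: number; // each synchronous round adds one to two weeks
}

function estimateWeeks(options: ScopeOptions): WeekRange {
  // ASSUMPTION: low end takes the 40% surcharge, high end the 60% surcharge.
  const uiFactor: WeekRange = options.separateMobileAndDesktop ? [1.4, 1.6] : [1, 1];
  const reviewDelay: WeekRange = [options.syncReviewRounds, options.syncReviewRounds * 2];
  const total = (side: 0 | 1): number =>
    phaseWeeks.discovery[side] +
    phaseWeeks.uxDesign[side] +
    phaseWeeks.uiDesign[side] * uiFactor[side] +
    phaseWeeks.handoff[side] +
    reviewDelay[side];
  return [total(0), total(1)];
}

const cleanScope = estimateWeeks({ separateMobileAndDesktop: false, syncReviewRounds: 0 });
const inflatedScope = estimateWeeks({ separateMobileAndDesktop: true, syncReviewRounds: 2 });
```

A clean scope sums to 7–12 weeks. Splitting mobile and desktop and adding two synchronous review rounds stretches the same engagement to roughly 10–18 weeks.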

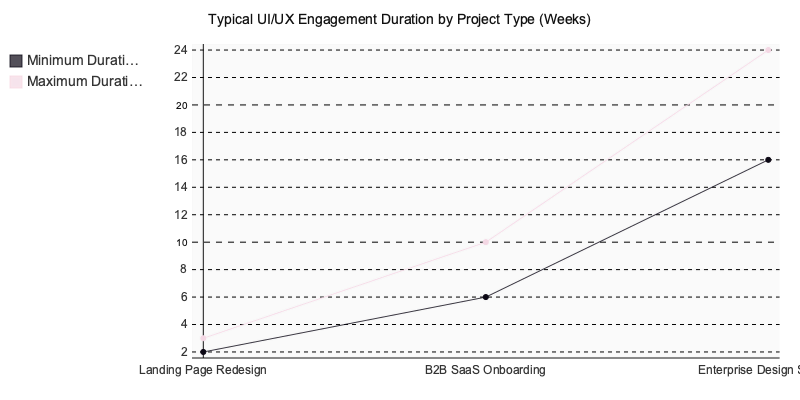

Timeline Benchmarks for Procurement Teams

These ranges align with Nielsen Norman Group’s project scoping research and what we’ve delivered across 100+ engagements:

- Landing page redesign: 2–3 weeks

- B2B SaaS onboarding flow: 6–10 weeks

- Full enterprise design system: 16–24 weeks

If a vendor quotes half these timelines, ask which phase they’re cutting. It’s almost always research or usability testing — the two phases that determine whether the final product actually works.

MyPlanet Design — Every engagement starts with a no-obligation discovery call. We map your product challenge to the right phase — research-only, prototype-only, or full end-to-end — so the contract reflects actual need, not assumptions. This single step prevents the over-scoping pattern we see in the majority of first-time agency engagements.

Weighted Evaluation Scorecard

Every agency comparison I’ve built for clients starts with the same problem: gut feeling dressed up as analysis. This scorecard fixes that. Each criterion carries a weight — ×3 for capabilities that make or break product outcomes, ×2 for factors that separate good from great, ×1 for tiebreakers. Scores run 1–5 based on publicly observable signals: published case studies, stated service offerings, third-party review platforms, and portfolio evidence. Multiply each raw score by its weight to get the parenthetical figure, then sum the column. A client we guided through agency selection last year used this exact framework, swapping weights to reflect their priority on research depth over dev handoff — and that’s exactly how you should use it. Adjust the weights; don’t touch the scoring logic.

| Criterion | Weight | MyPlanet Design | Uxstudioteam | Netguru | Ramotion |
|---|---|---|---|---|---|
| SaaS & digital-product UX portfolio depth | ×3 | ★★★★ (12) | ★★★★ (12) | ★★★★★ (15) | ★★★ (9) |
| Published UX-research methodology | ×3 | ★★★★ (12) | ★★★★★ (15) | ★★★ (9) | ★★★ (9) |
| Design-to-engineering handoff maturity | ×2 | ★★★★ (8) | ★★★ (6) | ★★★★★ (10) | ★★★ (6) |
| Quantified conversion / ROI data in case studies | ×2 | ★★★ (6) | ★★★★ (8) | ★★★★ (8) | ★★ (4) |
| Design-system & component-library creation | ×2 | ★★★★ (8) | ★★★★ (8) | ★★★★ (8) | ★★★ (6) |
| Cross-vertical versatility (fintech, health-tech, e‑commerce) | ×1 | ★★★ (3) | ★★★★ (4) | ★★★★★ (5) | ★★★★ (4) |
| Clutch verified-review density & avg. score | ×1 | ★★★★ (4) | ★★★★ (4) | ★★★★★ (5) | ★★★★ (4) |
| WEIGHTED TOTAL | /70 | 53 | 57 | 60 ✓ | 42 |
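The arithmetic behind the table is small enough to keep in a script, which makes re-weighting a one-line change. The sketch below encodes the star ratings from the scorecard above. The shortened criterion names and the helper functions are ours.

```typescript
const agencies = ["MyPlanet Design", "Uxstudioteam", "Netguru", "Ramotion"] as const;

interface Criterion {
  name: string;
  weight: number;
  stars: [number, number, number, number]; // same order as `agencies`
}

// Star ratings copied from the scorecard; names shortened for readability.
const scorecard: Criterion[] = [
  { name: "Portfolio depth", weight: 3, stars: [4, 4, 5, 3] },
  { name: "Research methodology", weight: 3, stars: [4, 5, 3, 3] },
  { name: "Handoff maturity", weight: 2, stars: [4, 3, 5, 3] },
  { name: "Quantified ROI data", weight: 2, stars: [3, 4, 4, 2] },
  { name: "Design-system creation", weight: 2, stars: [4, 4, 4, 3] },
  { name: "Cross-vertical versatility", weight: 1, stars: [3, 4, 5, 4] },
  { name: "Clutch reviews", weight: 1, stars: [4, 4, 5, 4] },
];

// Multiply each raw score by its weight, then sum each agency's column.
function weightedTotals(criteria: Criterion[]) {
  const totals = { "MyPlanet Design": 0, Uxstudioteam: 0, Netguru: 0, Ramotion: 0 };
  for (const { weight, stars } of criteria) {
    agencies.forEach((agency, column) => {
      totals[agency] += stars[column] * weight;
    });
  }
  return totals;
}

// Return a copy of the scorecard with one criterion's weight replaced.
function withWeight(criteria: Criterion[], name: string, weight: number): Criterion[] {
  return criteria.map((c) => (c.name === name ? { ...c, weight } : c));
}

const baselineTotals = weightedTotals(scorecard);
const researchHeavyTotals = weightedTotals(withWeight(scorecard, "Research methodology", 6));
```

With the table's weights this reproduces the 53 / 57 / 60 / 42 totals. Doubling the research-methodology weight to ×6 moves Uxstudioteam to 72 against Netguru's 69, which shows how sensitive the ranking is to a single weight.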

One pattern we see repeatedly when running this exercise with product teams: the “winner” in the raw numbers rarely stays the winner once you re-weight for your actual situation. A B2B SaaS team rebuilding its core platform should weight the research methodology row more heavily. Doubling that weight from ×3 to ×6 flips Uxstudioteam into the lead at 72 vs. Netguru’s 69. A startup that needs design and engineering under one roof won’t care about research purity; raise the handoff weight instead, and Netguru’s integrated handoff pulls further ahead.

We ran a version of this scorecard for a mid-market fintech client last year who initially fixated on Clutch scores. When we re-weighted toward quantified conversion data and research depth — the two criteria that actually predicted project success in their vertical — their shortlist changed completely. The agency they almost dismissed moved to the top.

Scores reflect publicly verifiable signals — portfolio content, stated service offerings, Clutch review profiles, and published case studies as of early 2025. Adjust weights to match your priorities, and re-score any criterion where your own RFP responses give you fresher data than what’s publicly available.

Choosing the Right Design Partner Starts with Knowing What You’re Buying

The difference between a productive design engagement and an expensive one comes down to specificity. Know what each phase delivers — research artifacts, validated wireframes, annotated UI systems, developer-ready handoff specs — and hold your partner accountable to those outputs, not just final screens.

Three things matter more than portfolio aesthetics: documented research methodology, defined stage gates with exit criteria, and a team that treats design systems as infrastructure rather than decoration. If a prospective agency can’t walk you through their discovery process in concrete terms, they’re selling aesthetics, not outcomes.

Scope tightly. Vet on process. Negotiate deliverables by phase, not by screen count. The companies we’ve seen get the most from UI UX design services are the ones that treat the engagement like a joint product decision — not a creative services purchase order.

If you’re evaluating partners and want a team that covers research through development under one roof, MyPlanet Design is worth a conversation.

Written by Nazar Verhun, CEO & Lead Designer at MyPlanet Design.

Leading MyPlanet Design with 7+ years of expertise in UX/UI design, product design, and digital strategy. Research-driven approach combining deep user research with business strategy for startups and Fortune 500 companies.

Frequently Asked Questions

What do UI UX design services include?

UI UX design services typically cover six core phases: user research, information architecture, wireframing, prototyping, visual design, and usability testing. Together, these phases produce a validated product blueprint — including personas, journey maps, site maps, annotated component specs, and tested prototypes — before any production code is written.

What is the ROI of UX design services?

According to Forrester research, every dollar invested in UX can return up to $100 when the right deliverables are produced and implemented correctly. However, this return depends on shipping functional artifacts like research reports and interaction specs, not just polished visual mockups.

How do I choose the right UI UX design agency?

Look for an agency that defines a full deliverable stack upfront — research synthesis, journey maps, annotated specs, and development-ready component libraries — rather than one that only promises screen designs. A credible agency will align on deliverables before any wireframe work begins and produce outputs that survive development handoff and real user testing.

What is the difference between UI design and UX design services?

UX design focuses on the research, information architecture, and interaction logic that determine how a product functions for real users. UI design addresses the visual layer — typography, color, layout, and component styling. Effective UI UX design services integrate both disciplines so that the final product is usable and visually coherent.

Why do UX design projects fail after handoff to developers?

Most failures stem from misalignment at the brief stage, where clients request a redesign but nobody defines the actual deliverable stack beyond mockups. When agencies deliver only pixel-perfect screens without annotated component specs, interaction logic, or validated prototypes, developers lack the detail needed to build the intended experience accurately.

What deliverables should a UX agency provide?

A competent UX agency should deliver research reports with validated personas, journey maps, site maps and content hierarchies, interactive prototypes, visual design systems with component libraries, and usability testing results. These artifacts ensure the design is grounded in user evidence and ready for engineering teams to implement without guesswork.